TL;DR - Read This First

American consumers lost $12.5 billion to fraud in 2024, a 25% jump in a single year. Biometric payment platforms were supposed to fix this - but single-modal systems (face-only or fingerprint-only) are increasingly defeated by AI tools including deepfakes, voice cloning, and camera injection attacks. The answer is not just "add more biometrics." It is about how the AI fraud models sitting on top of those biometric signals are built, trained, and maintained. This article covers the six things that actually move the needle: liveness detection quality (tested against ISO 30107-3), fusion strategy, adversarial training, behavioural signals, model drift monitoring, and explainability with audit trails.

Why AI Fraud Is Winning - For Now

Biometric payments were positioned as the solution to password-based fraud. And for a while, they were. But the fraud environment has shifted in a fundamental way: the same AI that makes face recognition accurate also makes it possible to generate a convincing fake face. The same voice models that authenticate a customer can be cloned from 30 seconds of audio on LinkedIn.

The scale of the problem is not theoretical. In 2024, injection attacks against biometric systems rose ninefold, and virtual camera exploits - where fraudsters replace a real camera feed with a deepfake video - rose 28-fold year-on-year. 70% of people are moderately or extremely worried about generative AI-based fraud and deepfakes. And yet most biometric platforms still run single-modal AI models trained on clean, non-adversarial data.

The core issue is not the biometrics themselves. It is the AI fraud models wrapped around them. A fingerprint scanner is only as fraud-resistant as the liveness detection and anomaly scoring behind it.

The Threat Gap

99% of financial institutions now use AI to fight AI-driven fraud - but bad actors share intelligence and update attack methods faster than most institutions can retrain their models. This creates a lag window that fraudsters actively target.

What Is AI Fraud in a Biometric Payment Context?

AI fraud in biometric payment platforms refers to attacks that use machine learning tools to bypass, spoof, or fool the biometric layer of a transaction. The six main attack types you need to know are:

| Attack Type | How It Works | Risk Level |
|---|---|---|
| Deepfake face injection | An AI-generated video of a real person's face is fed into the camera stream, bypassing face recognition | CRITICAL |
| Voice cloning | A synthetic voice model built from publicly available audio is used to pass voice authentication | CRITICAL |
| Synthetic identity | Fragments of real identity data combined with AI-generated documents create a fake identity that passes KYC | CRITICAL |
| Presentation attack (PAD) | Physical artefacts like printed photos, silicone masks, or 3D-printed fingerprints used at the sensor | HIGH |
| Camera injection / virtual camera | Real camera input is replaced at the software driver level with a fraudulent feed | CRITICAL |
| Adversarial ML input | Subtle pixel-level changes to genuine biometric input designed to confuse the ML classifier | HIGH |

Each of these requires a different defensive layer in your AI fraud model. No single technique neutralises all of them - which is exactly why a layered architecture matters.

Why Single-Modal Biometrics Is Not Enough

A fingerprint scanner, in isolation, has a known weakness: it cannot tell the difference between a live finger and a high-quality silicone replica without an additional liveness check. A face recognition system, on its own, is increasingly unable to distinguish a real face from a well-rendered deepfake - especially if the model was trained before 2023, when deepfake tooling became widely accessible.

This is not a hypothetical edge case. In regions like Africa, AI-generated selfie anomalies and deepfake attempts increased sevenfold during 2024 alone. Globally, only 0.1% of people can correctly identify all AI-generated content, according to iProov research - which is a strong argument against relying on human review as a backup.

"Multimodal biometric systems combining facial and fingerprint recognition provide 96% higher security than single-mode systems in 2025."

- CoinLaw Biometric Payment Authentication Statistics

The logic of multimodal systems is straightforward: when you force an attacker to spoof two independent biometric signals in the same attempt - say, a face and a fingerprint, or a voice and an iris scan - the chance of both spoofs succeeding together is far lower than the chance of either succeeding alone. A deepfake face does not give you a spoofed fingerprint for free.
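
As a rough illustration of that argument, the sketch below multiplies per-modality spoof success rates to get the chance that every modality is beaten in one attempt. The rates are hypothetical numbers chosen for the example, and the calculation assumes the modalities fail independently, which a real deployment would need to verify rather than take for granted.

```python
def joint_spoof_probability(per_modality_success):
    """Chance that all modalities are spoofed in the same attempt,
    assuming each spoof succeeds independently of the others."""
    joint = 1.0
    for p in per_modality_success:
        joint *= p
    return joint


# Hypothetical per-attempt spoof success rates, for illustration only.
face_only = joint_spoof_probability([0.05])
face_and_finger = joint_spoof_probability([0.05, 0.02])

print(f"face only:          {face_only:.4f}")
print(f"face + fingerprint: {face_and_finger:.4f}")
```

If the two modalities share a weakness (for example, both are captured through the same compromised device), the independence assumption breaks down and the real benefit is smaller than this product suggests.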

The Six Pillars of a Stronger AI Fraud Model

Liveness Detection - Your First and Most Critical Layer

Liveness detection answers one question: is the biometric input coming from a real, physically present person right now? Without it, every downstream fraud model is working on potentially poisoned data.

There are two approaches - active and passive. Active liveness asks the user to blink, smile, or move their head. It is harder to spoof but adds friction. Passive liveness works silently in the background, analysing texture, depth cues, micro-movements, and subtle inconsistencies that reveal a fake input. For payment platforms where user experience matters, passive liveness is increasingly the standard.

The benchmark for liveness detection quality is ISO/IEC 30107-3, the international standard for Presentation Attack Detection (PAD). Certification levels matter:

| PAD Level | What It Covers |
|---|---|
| Level 1 - Printed photos, basic video replays (max artefact cost $30) | Baseline protection against low-effort attacks. Suitable for low-risk transactions. |
| Level 2 - Advanced deepfakes, 3D masks, silicone replicas (max artefact cost $300) | Required for financial services and high-value transactions. This is the target for any biometric payment platform. |

Procurement Note

NIST evaluations - while the gold standard for biometric accuracy - do not assess resilience against deepfakes or camera injection attacks. For full-spectrum PAD, look for iBeta Level 2 certification, which is based on ISO 30107-3 and issued by a NIST/NVLAP-accredited lab.
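
When reading a PAD test report, it helps to know the two error rates ISO/IEC 30107-3 uses: APCER (attack presentations wrongly classified as bona fide) and BPCER (bona fide presentations wrongly rejected). The sketch below computes both from a list of labelled test outcomes. The data layout, the species names, and the counts are assumptions made for this example, not the format of any lab's report.

```python
from collections import defaultdict


def pad_error_rates(results):
    """results: iterable of (species, accepted) pairs.

    species is None for a bona fide presentation, otherwise a label for
    the attack instrument type. accepted is True when the PAD subsystem
    classified the presentation as bona fide.
    Returns (apcer_by_species, worst_case_apcer, bpcer).
    """
    attack_total = defaultdict(int)
    attack_accepted = defaultdict(int)
    bona_total = 0
    bona_rejected = 0
    for species, accepted in results:
        if species is None:
            bona_total += 1
            if not accepted:
                bona_rejected += 1
        else:
            attack_total[species] += 1
            if accepted:
                attack_accepted[species] += 1
    apcer = {s: attack_accepted[s] / attack_total[s] for s in attack_total}
    worst = max(apcer.values(), default=0.0)
    bpcer = bona_rejected / bona_total if bona_total else 0.0
    return apcer, worst, bpcer


# Hypothetical test run: two attack species plus genuine users.
results = (
    [("printed_photo", False)] * 50
    + [("silicone_mask", False)] * 48
    + [("silicone_mask", True)] * 2
    + [(None, True)] * 97
    + [(None, False)] * 3
)
apcer, worst_apcer, bpcer = pad_error_rates(results)
print(apcer, worst_apcer, bpcer)
```

APCER is typically reported per attack species, with the worst species driving the headline figure, because an attacker only needs the one artefact type that works.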

Fusion Strategy - Where Most Platforms Get It Wrong

Multimodal biometrics only delivers its security advantage if the scores from different modalities are combined intelligently. There are three main fusion approaches, and the choice has a direct impact on fraud resistance:

- Score-level fusion. Each modality produces an independent score, and those scores are mathematically combined before a pass/fail decision is made. This is the most common approach and offers a good balance of security and simplicity.

- Feature-level fusion. Raw feature data from multiple biometric sensors is merged before classification. This produces higher accuracy but requires all modalities to be captured at the same time, which creates practical constraints in payment flows.

- Decision-level fusion. Each modality makes its own accept/reject decision independently, and the final outcome is determined by a voting or logic rule. Easier to implement but more vulnerable to a single compromised modality overriding the others.

For AI fraud model resilience, score-level fusion with adaptive weighting is currently the most defensible approach. It allows the system to down-weight a compromised modality in real time if anomaly signals are detected - rather than treating each modality as equally reliable at all times.
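
A minimal sketch of score-level fusion with adaptive weighting might look like the following. The function name, the weights, the anomaly signals, and the 0.6 acceptance threshold are all hypothetical; the point is only to show how a modality flagged as suspicious contributes less to the fused score.

```python
def fuse_scores(scores, base_weights, anomaly):
    """Score-level fusion with adaptive weighting.

    scores:       modality -> match score in [0, 1]
    base_weights: modality -> default trust weight
    anomaly:      modality -> anomaly signal in [0, 1]
                  (1 means the input looks compromised)
    """
    # Shrink each modality's weight in proportion to its anomaly signal.
    weights = {
        m: base_weights[m] * (1.0 - anomaly.get(m, 0.0)) for m in scores
    }
    total = sum(weights.values())
    if total == 0:
        return 0.0  # nothing trustworthy left, so fail closed
    return sum(weights[m] * scores[m] for m in scores) / total


THRESHOLD = 0.6  # hypothetical acceptance threshold

scores = {"face": 0.95, "fingerprint": 0.40}
base_weights = {"face": 0.5, "fingerprint": 0.5}

# No anomaly signals: the strong face score carries the decision.
calm = fuse_scores(scores, base_weights, {})

# Camera-integrity check flags the face stream: its weight drops sharply.
flagged = fuse_scores(scores, base_weights, {"face": 0.8})

print(calm >= THRESHOLD, flagged >= THRESHOLD)
```

With a decision-level OR rule, the high face score alone would have approved the transaction in both cases. In practice a strong anomaly signal would probably also feed the fraud score directly rather than only adjusting weights, since down-weighting a flagged modality that happens to score low would otherwise raise the fused score.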

Adversarial Training - The Most Under-Invested Area

Most biometric fraud models are trained on clean, real-world data. That is fine for accuracy. It is not fine for security.

An adversarially trained model is deliberately exposed to attack patterns - deepfake images, synthetically generated fingerprints, manipulated voice samples - during training, so that it learns to recognise the subtle artefacts these attacks leave behind. A standard face recognition model and an adversarially hardened one will perform similarly on legitimate users. But when a GAN-generated face hits the adversarially trained model, it has a much higher chance of being flagged.
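
One simple way to expose a model to attack patterns is to mix labelled attack samples into the training set at a controlled ratio. The sketch below does only that data-assembly step; the function name, the 30% attack fraction, and the placeholder sample names are assumptions for illustration. It is data augmentation with spoof examples, not gradient-based adversarial example training, which is a separate technique aimed at the pixel-level perturbation attacks listed earlier.

```python
import random

BONA_FIDE, ATTACK = 0, 1


def build_training_set(genuine, attacks, attack_fraction=0.3, seed=0):
    """Return a shuffled list of (sample, label) pairs in which roughly
    attack_fraction of the examples are labelled attack samples."""
    if not 0.0 < attack_fraction < 1.0:
        raise ValueError("attack_fraction must be strictly between 0 and 1")
    if not attacks:
        raise ValueError("at least one attack sample is required")
    rng = random.Random(seed)
    # Number of attack examples needed to reach the target fraction.
    n_attacks = round(len(genuine) * attack_fraction / (1.0 - attack_fraction))
    # Sample with replacement so a small red-team corpus can still be used.
    chosen = [rng.choice(attacks) for _ in range(n_attacks)]
    dataset = [(s, BONA_FIDE) for s in genuine]
    dataset += [(s, ATTACK) for s in chosen]
    rng.shuffle(dataset)
    return dataset


# Placeholder identifiers standing in for real image or audio samples.
genuine = [f"genuine_{i}" for i in range(70)]
attacks = ["deepfake_a", "silicone_print_b", "cloned_voice_c"]

dataset = build_training_set(genuine, attacks)
n_attack_rows = sum(label for _, label in dataset)
print(len(dataset), n_attack_rows)
```

Sampling with replacement over-represents a small attack corpus, so a model trained this way can overfit to those specific artefacts; refreshing the attack set each training cycle matters more than the exact ratio.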

What the Numbers Say

AI models trained with adversarial techniques and behavioural signals have shown up to 85% reduction in false positives while maintaining high fraud detection rates. The U.S. Treasury's machine-learning fraud detection programme prevented and recovered over $4 billion in fraud in FY2024, up from $652.7 million the year before - a 513% increase in a single year.

Behavioural Biometrics as a Continuous Signal

Behavioural biometrics is one of the most underused tools in payment fraud prevention. Where physical biometrics verify who you are at a point in time, behavioural biometrics monitor how you interact - typing speed, touch pressure, swipe patterns, mouse movement, even device orientation - across the entire session.

This matters for AI fraud because it adds a time-series dimension that is extremely hard to fake. A fraudster who successfully spoofs a face scan cannot simultaneously replicate the subtle behavioural fingerprint of the legitimate account holder during the payment process itself.

Behavioural biometrics platforms have achieved a 15% reduction in false positives in 2025, while identifying account takeover attempts that cleared the initial biometric check. In one benchmark, behavioural analytics combined with biometrics could stop fraud attempts within 5 milliseconds.
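
To make the idea concrete, the sketch below scores a session's typing rhythm against an enrolled profile, measuring how far the session's mean inter-key interval sits from the profile mean in units of the profile's standard deviation. The single feature, the sample timings, and the cut-off of 3 are assumptions for illustration; production systems combine many behavioural features and learn their thresholds from data.

```python
from statistics import mean, stdev


def behaviour_anomaly(profile_intervals, session_intervals):
    """Distance of the session's mean inter-key interval from the enrolled
    profile mean, in profile standard deviations. Larger absolute values
    mean the session looks less like the account holder."""
    mu = mean(profile_intervals)
    sigma = stdev(profile_intervals)
    if sigma == 0:
        raise ValueError("profile has no variation; cannot score")
    return (mean(session_intervals) - mu) / sigma


# Hypothetical inter-key intervals in milliseconds.
profile = [182, 175, 190, 168, 185, 179, 172, 188]
owner_session = [180, 176, 186]
scripted_session = [95, 102, 88]  # much faster, machine-like rhythm

ANOMALY_CUTOFF = 3.0  # hypothetical review threshold

for name, session in [("owner", owner_session), ("scripted", scripted_session)]:
    score = behaviour_anomaly(profile, session)
    print(name, "flag" if abs(score) > ANOMALY_CUTOFF else "ok")
```

Because the score can be recomputed as each new keystroke or swipe arrives, the same check can run throughout the payment flow rather than only at login.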

Model Drift Monitoring - The Problem Nobody Talks About

A fraud model that was accurate in January may be significantly less accurate by September. Fraud patterns change. Attack tools improve. User populations shift. If your AI fraud model is not continuously monitored for distributional drift, it will go stale without any visible alarm.

Drift monitoring should be a non-negotiable part of any production fraud detection pipeline. The specific things to watch are:

- Input feature drift - are the raw biometric signal characteristics changing from the training baseline?

- Score distribution drift - are fraud scores shifting upward or downward across your population?

- False positive and false negative rate trends over rolling 30-day windows

- Attack type distribution - are you seeing new attack patterns that are underrepresented in training data?

The cadence for retraining depends on your threat environment. For high-volume payment platforms in markets with active fraud ecosystems, quarterly retraining cycles are a minimum. Monthly is better.
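
Score distribution drift can be tracked with a simple statistic such as the Population Stability Index (PSI), which compares the binned distribution of current scores against a baseline. The sketch below is one possible implementation under stated assumptions: scores lie in [0, 1], bins are equal-width, and the sample data is synthetic. The cut-offs in the comments are a common rule of thumb, not a standard.

```python
import math


def population_stability_index(baseline, current, bins=10, eps=1e-6):
    """PSI between two samples of scores in [0, 1], using equal-width bins."""

    def proportions(values):
        counts = [0] * bins
        for v in values:
            idx = min(int(v * bins), bins - 1)  # put v == 1.0 in the last bin
            counts[idx] += 1
        # eps keeps empty bins from producing log(0) or division by zero
        return [max(c / len(values), eps) for c in counts]

    expected = proportions(baseline)
    actual = proportions(current)
    return sum((a - e) * math.log(a / e) for a, e in zip(actual, expected))


# Synthetic fraud scores: a flat baseline and a sample shifted upward.
baseline_scores = [i / 100 for i in range(100)]
shifted_scores = [0.5 + i / 200 for i in range(100)]

psi_same = population_stability_index(baseline_scores, baseline_scores)
psi_shifted = population_stability_index(baseline_scores, shifted_scores)

# Rule of thumb: below 0.1 stable, 0.1 to 0.25 worth watching,
# above 0.25 significant shift that warrants a review.
print(psi_same, psi_shifted > 0.25)
```

The same function can be applied per input feature to cover feature drift, with one alert threshold per monitored distribution.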

Explainability and Audit Trails - Now a Regulatory Issue

Black-box fraud models are increasingly a compliance liability, not just a technical limitation. Regulators and standards bodies across major markets - through India's data protection framework, Europe's AI Act, and PCI-DSS - are placing growing pressure on financial institutions to explain why a transaction was declined or flagged.

For AI fraud models in biometric payment platforms, this means two things in practice. First, the fraud scoring logic needs to be interpretable at the decision level - you should be able to say which signals contributed to a high fraud score. Second, every fraud decision needs a timestamped, immutable audit trail that can be produced in a regulatory review or customer dispute.

Explainable AI (XAI) approaches like SHAP values and attention maps in deep learning models make this achievable without sacrificing model performance. The cost of implementing explainability is far lower than the cost of a regulatory enforcement action.
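
For the audit-trail half of that requirement, one common pattern is a hash-chained log, where each record stores the hash of the previous one so that any later edit breaks the chain. The sketch below is a minimal in-memory version; the record fields, function names, and contribution values are hypothetical, and the contributions stand in for whatever an explainability method such as SHAP would produce.

```python
import hashlib
import json
from datetime import datetime, timezone

GENESIS_HASH = "0" * 64


def _digest(body):
    """SHA-256 over a canonical JSON encoding of the record body."""
    payload = json.dumps(body, sort_keys=True).encode("utf-8")
    return hashlib.sha256(payload).hexdigest()


def append_decision(log, transaction_id, decision, contributions):
    """Append a timestamped fraud decision to a hash-chained log."""
    record = {
        "transaction_id": transaction_id,
        "decision": decision,
        "contributions": contributions,  # signal -> share of fraud score
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "prev_hash": log[-1]["hash"] if log else GENESIS_HASH,
    }
    record["hash"] = _digest(record)
    log.append(record)
    return record


def verify_chain(log):
    """Return True if no record has been altered, removed, or reordered."""
    prev_hash = GENESIS_HASH
    for record in log:
        body = {k: v for k, v in record.items() if k != "hash"}
        if record["prev_hash"] != prev_hash or _digest(body) != record["hash"]:
            return False
        prev_hash = record["hash"]
    return True


audit_log = []
append_decision(audit_log, "txn-001", "decline",
                {"face_liveness": 0.62, "behaviour": 0.30, "device": 0.08})
append_decision(audit_log, "txn-002", "approve",
                {"face_liveness": 0.05, "behaviour": 0.02, "device": 0.01})
print(verify_chain(audit_log))
```

A hash chain makes tampering detectable rather than impossible, so it is usually paired with write-once storage or an externally anchored checkpoint to meet an immutability requirement.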

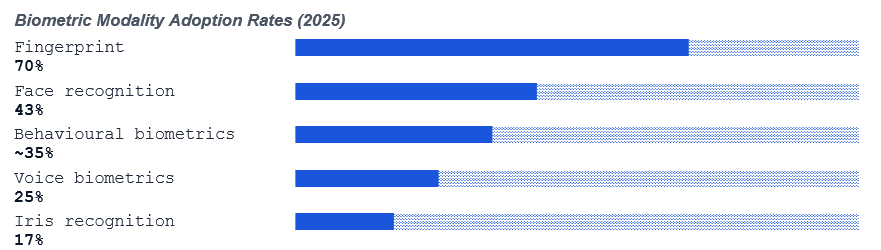

What the Data Says About Modality Adoption

Sources: CoinLaw Biometric Payment Authentication Statistics 2025; iProov Biometric Statistics 2025.

The picture this data paints is clear: most deployments are still running single or dual modality. This is exactly the gap that AI fraud attacks are being designed to target. The growth in AI-driven biometric fraud is not outpacing the technology - it is outpacing the deployment of the technology's best features.

A Practical Implementation Checklist

If you are responsible for a biometric payment platform and want to move from where most deployments are today to where they need to be, this is the sequence that delivers the most risk reduction per unit of effort:

1. Audit your current liveness detection certification. If your face or fingerprint component is not iBeta Level 2 certified against ISO 30107-3, that is your first priority. No other fraud model hardening matters if the biometric input itself can be trivially spoofed.

2. Add camera integrity verification. Given the 28x spike in virtual camera exploits in 2024, camera injection detection needs to be a standard component, not an optional add-on. This checks that the video stream is coming from a real hardware device and has not been replaced at the software layer.

3. Move from decision-level to score-level fusion. If you are running multiple biometric modalities with separate accept/reject gates, consolidate them into a unified score-level fusion layer with adaptive weighting based on modality reliability signals.

4. Introduce adversarial examples into your training pipeline. Partner with a red team or use generative AI tools to produce synthetic attack examples - deepfakes, mask artefacts, synthetic fingerprints - and incorporate them into your next model training cycle.

5. Layer in behavioural biometrics post-authentication. The session does not end when the biometric check passes. Behavioural monitoring across the payment flow catches account takeovers that cleared the initial authentication gate.

6. Set up model monitoring with automated drift alerts. Instrument your production fraud model to emit daily distributions of key features and scores. Define a drift limit for each monitored distribution and trigger a review whenever it is exceeded. Establish a retraining cadence matched to your threat environment.

The Regulatory Context You Cannot Ignore

Strengthening AI fraud models in biometric payment platforms is not only a security decision - it is increasingly a compliance necessity. Key reference points for 2025 and beyond:

97% of fraud management experts agree that stronger international cooperation to share intelligence is needed to combat financial fraud, according to BioCatch's 2024 survey. Within your own organisation, fraud signals should not live in silos between channels. A multimodal AI fraud model is most effective when it has visibility across the full transaction journey, not just the authentication step.

Key Takeaways

- AI fraud attacks against biometric payment platforms are growing in both volume and sophistication - injection attacks surged 9x in 2024 alone

- Multimodal biometrics reduces fraud surface area, but only if the AI fraud models behind them are built correctly

- Liveness detection quality, certified to ISO 30107-3 Level 2, is the non-negotiable baseline

- Fusion strategy matters - score-level fusion with adaptive weighting outperforms decision-level approaches for fraud resistance

- Adversarial training against synthetic attacks and behavioural biometrics are the two most under-deployed capabilities in most platforms

- Model drift monitoring must be continuous - fraud patterns evolve faster than annual retraining cycles can keep up with

- Explainability and audit trails are now a compliance requirement, not just a nice-to-have

The Bottom Line on AI Fraud in Biometric Payments

The biometric technology is largely not the problem. The AI fraud models that interpret and act on biometric signals - their training data, their fusion logic, their liveness checks, their monitoring - those are where the gap is.

The good news is that the tools to close this gap exist. ISO 30107-3 certification, adversarial training, behavioural biometrics, and score-level fusion are production-ready. The organisations deploying them systematically are seeing measurable reductions in fraud loss and false positives simultaneously.

The organisations that are not are leaving a widening gap that attackers - who benchmark their tools against live production systems - are actively measuring and exploiting.

Mantra Softech provides multimodal biometric identity and payment authentication solutions for enterprise and government deployments. For technical consultations on AI fraud model architecture, contact our team at mantrasoftech.com.

Frequently Asked Questions

What is AI fraud in biometric payment systems?

AI fraud in biometric payment systems refers to attacks where criminals use artificial intelligence tools - including deepfakes, synthetic identities, and voice cloning - to bypass biometric authentication and authorise fraudulent transactions. The same AI powering face recognition can be used to generate a fake face that fools it.

How do multimodal biometrics reduce fraud?

Multimodal biometric systems combine two or more authentication signals, such as face recognition plus fingerprint scanning. A fraudster who can generate a convincing deepfake face still cannot simultaneously produce a matching spoofed fingerprint or behavioural pattern. Industry data shows multimodal systems provide 96% higher security than single-mode systems.

What is liveness detection and why does it matter?

Liveness detection confirms the biometric input is coming from a real, physically present person rather than a photo, recorded video, 3D mask, or AI-generated deepfake. Without it, any biometric system is vulnerable to even basic spoofing attacks. Passive liveness detection does this transparently in the background with no added friction for the user.

What is ISO/IEC 30107-3?

ISO/IEC 30107-3 is the international standard for Biometric Presentation Attack Detection. It defines how biometric systems are independently tested for their ability to resist spoofing attacks from printed photos up to advanced deepfakes and 3D masks. iBeta Level 2 certification under this standard is the current benchmark for financial-grade biometric systems.

How much money is being lost to fraud?

American consumers alone lost $12.5 billion to fraud in 2024, a 25% increase year-on-year. Globally, Juniper Research forecasts that online payment fraud losses will cumulatively surpass $362 billion between 2023 and 2028. The U.S. Treasury's AI-powered fraud detection programme prevented and recovered over $4 billion in FY2024.

What are camera injection attacks?

Camera injection attacks occur when a fraudster replaces the real hardware camera feed with a synthetic or pre-recorded video stream at the software driver level. The biometric system receives what appears to be a live camera input but is actually a deepfake or replayed recording. These attacks surged 9x in 2024, with virtual camera exploits spiking 28x in the same period.

What is model drift?

Model drift occurs when the statistical distribution of inputs that a fraud model encounters in production shifts away from its training data - because fraud patterns have evolved, user behaviour has changed, or both. A model that was highly accurate at deployment can silently degrade over time. Regular drift monitoring and scheduled retraining are necessary to maintain detection performance.
